NEURAL VISION

What does a neural network see when it sees you?

Frozen Moments of Machine Perception

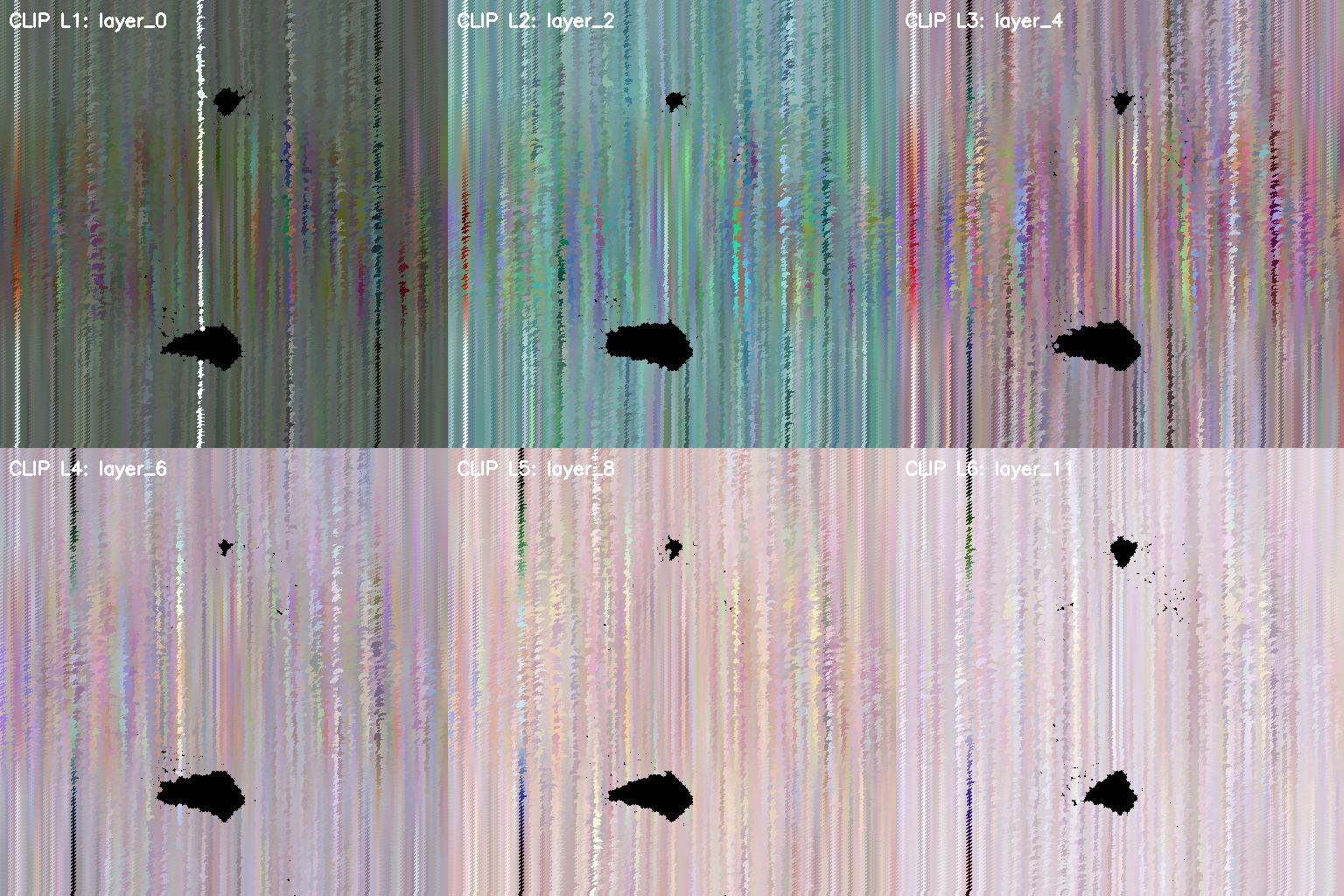

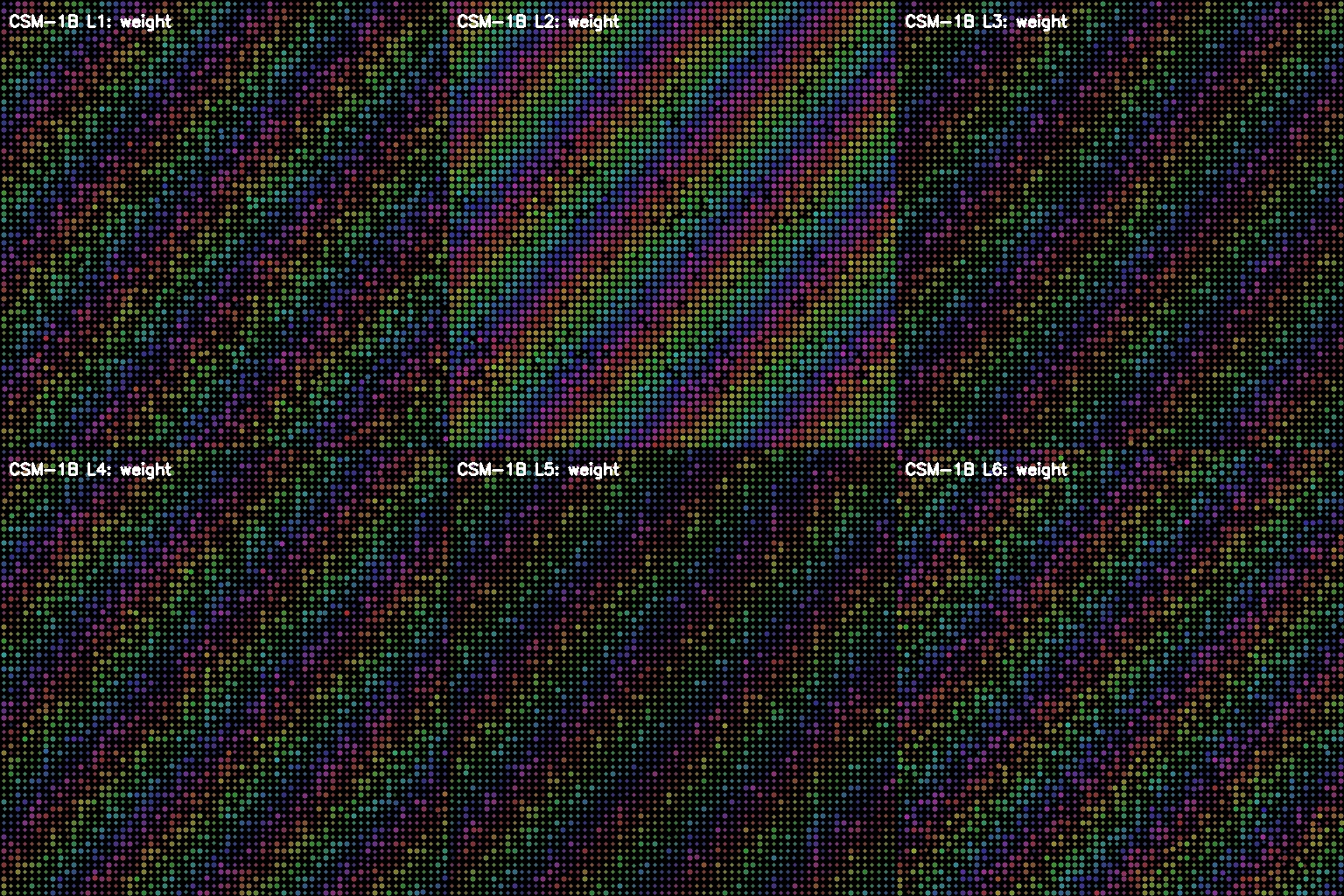

These are visual snapshots captured mid-thought—neural activations extracted from the hidden layers of deep learning models and transformed into art. Each image is a window into how machines decode reality, translating pixels into patterns, edges into meaning, chaos into understanding.

Every image began as light hitting a sensor. But somewhere between input and output, the neural network transforms that light into abstract representations—patterns we can extract, freeze, and visualize as something entirely new.

(3, 224, 224) • Normalize: μ=[0.485, 0.456, 0.406] • Audio: n_mels=128, sr=22050 Hz

ResNet18 | CSM-1B • Layers: conv1 → layer1...layer4 • Activation: f(x) = max(0, x)

register_forward_hook() • Outputs: (B, C, H, W) tensors • Layers: 6 convolutional blocks

8 render pipelines • Normalize: (x - min) / (max - min) • Output: RGB uint8 [0-255]
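The output step listed above, min-max normalization followed by conversion to RGB uint8, can be sketched roughly as follows. This is a minimal illustration using NumPy, not the project's actual render code: the function names `activation_to_uint8` and `to_rgb` are hypothetical, the handling of a constant map is an assumption, and replicating one channel into three is a stand-in for whatever color mapping the render pipelines really apply.

```python
import numpy as np


def activation_to_uint8(channel):
    """Scale one 2-D activation map to 0..255 using (x - min) / (max - min)."""
    channel = np.asarray(channel, dtype=np.float32)
    lo, hi = float(channel.min()), float(channel.max())
    if hi == lo:
        # Assumption: a constant map has no contrast, so render it as black.
        return np.zeros(channel.shape, dtype=np.uint8)
    scaled = (channel - lo) / (hi - lo)  # values now lie in [0, 1]
    return np.round(scaled * 255).astype(np.uint8)


def to_rgb(gray):
    """Replicate a grayscale map into three channels (placeholder colormap)."""
    return np.stack([gray, gray, gray], axis=-1)


fmap = np.array([[-2.0, 0.0], [2.0, 3.0]])
gray = activation_to_uint8(fmap)
rgb = to_rgb(gray)
print(gray.tolist(), rgb.shape, rgb.dtype)
```

Normalizing each map independently maximizes contrast within that map, at the cost of making brightness incomparable between maps; a shared min and max across a layer would be the alternative.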

Extracting the In-Between

Neural Vision captures the moments before a model makes its decision, the raw, unfiltered activations in its hidden layers. These are normally invisible: millions of artificial neurons firing, transforming input into understanding. By extracting these activation maps and rendering them as visual art, we reveal the alien beauty of machine perception.

Built With PyTorch & Flask

Neural Vision is a full-stack application that combines deep learning visualization with real-time audio processing. A Flask backend handles model inference, while the Web Audio API enables live microphone capture and waveform display.

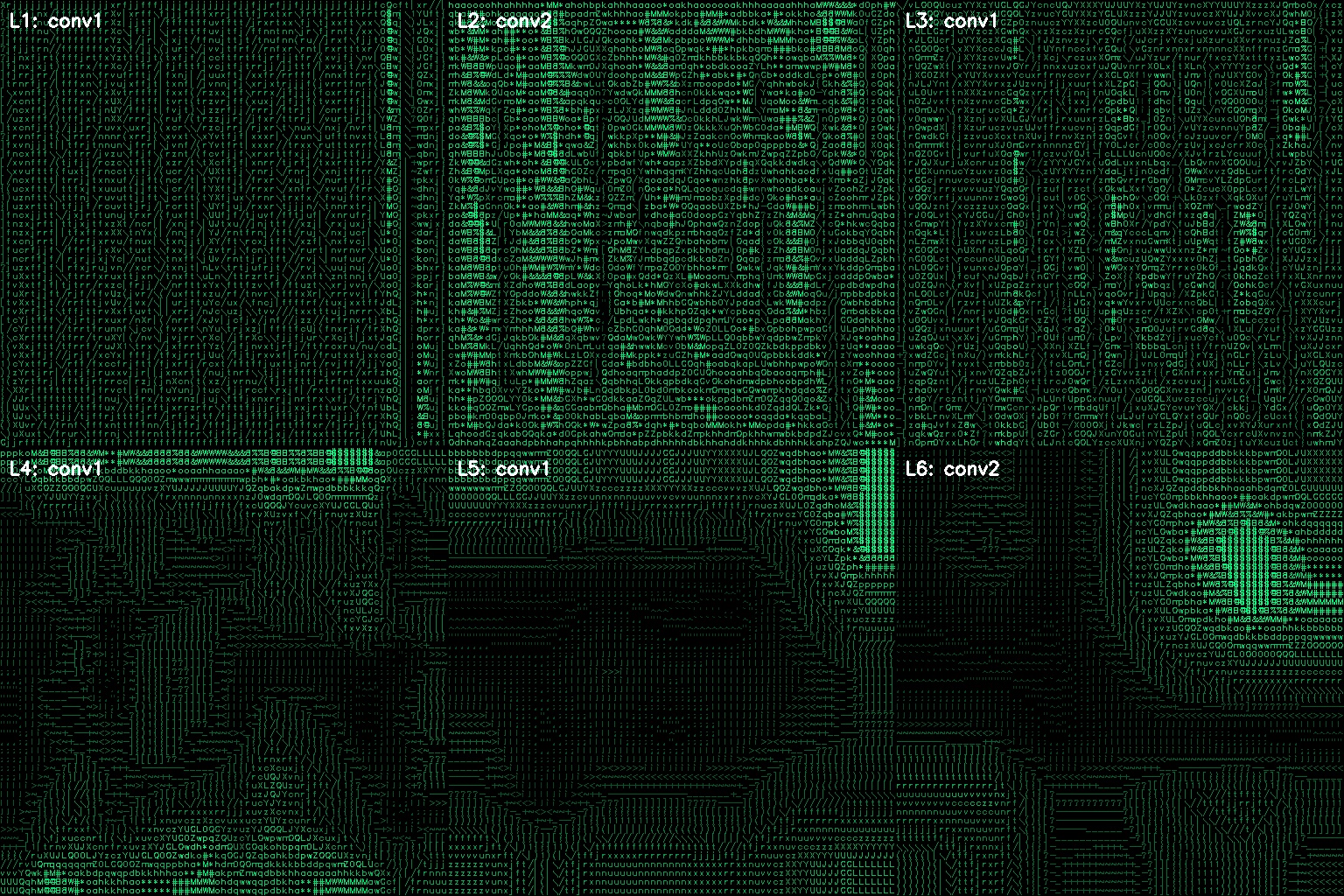

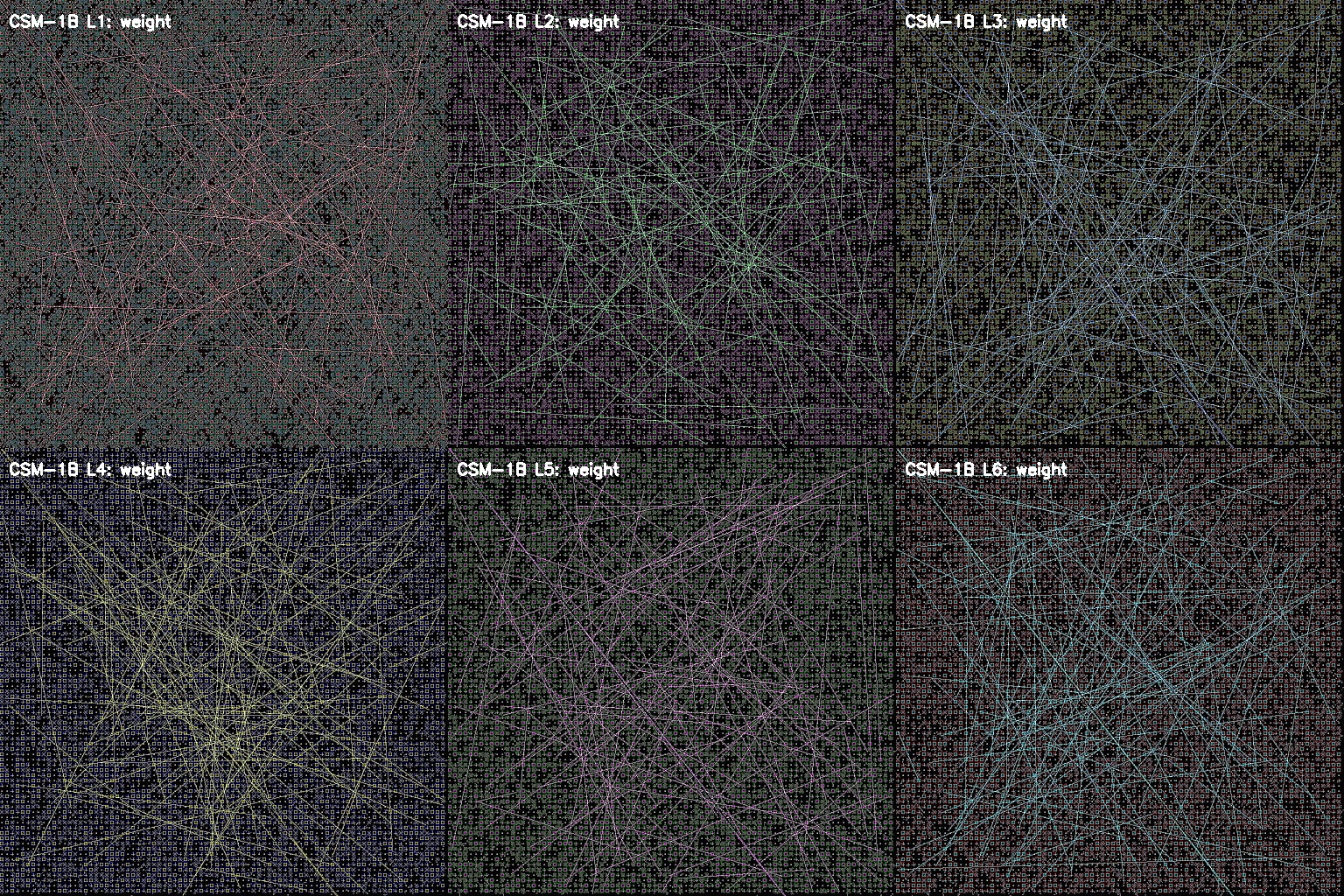

Progressive Feature Extraction

Neural Vision captures activations from 6 key convolutional layers, showing how the network builds increasingly complex representations from simple edges to high-level semantic features.

Educational & Creative Tool

Neural Vision makes deep learning interpretable and accessible. Perfect for understanding how convolutional neural networks process visual and audio information, teaching AI concepts, or creating generative art from neural activations.

Neural Vision FAQ

What is Neural Vision?

Neural Vision is an interactive tool that extracts hidden-layer activations from deep learning models and renders them as visual art. It reveals how convolutional neural networks perceive and process images and audio.

Does Neural Vision require a GPU?

No. The Neural Vision client runs in the browser and displays activations computed by and served from a Flask backend. The server handles PyTorch inference, so users need only a modern web browser.

What CNN concepts does Neural Vision demonstrate?

Neural Vision demonstrates convolutional feature extraction, activation maps, forward hooks, progressive layer abstraction from edges to semantics, and 8 distinct rendering pipelines for visualizing internal network states.

Can Neural Vision process audio inputs?

Yes. Audio is converted to mel-spectrograms using librosa at a 22050 Hz sample rate with 128 mel bands, then fed through the same convolutional pipeline as images for visualization.

Is Neural Vision available to use online?

Neural Vision is currently a research and development project. A public demo is planned. The codebase uses Flask, PyTorch, OpenCV, and the Web Audio API for real-time processing.